It’s 8:15 AM on a Monday. You open your inbox to find a subject line that makes your stomach drop: “URGENT: Traffic Drop on Our Main Service Page!”

You spend the next three hours digging through analytics, crawl data, and search console reports, only to pinpoint the culprit: a new blog post, written by the client’s marketing intern, is now competing with their most important money page for the same keyword.

It’s a classic case of keyword cannibalization, and it’s been silently chipping away at their rankings for weeks.

Sound familiar? For agencies managing multiple clients, this reactive, fire-fighting mode is an exhausting and inefficient way to work. While you’re busy putting out one fire, another could be starting in a different client’s account.

But what if you could get an alert the moment that new blog post was published? What if you could build a system that acts as a 24/7 watchdog for your entire client portfolio, flagging these “silent killers” of SEO performance before they can impact the bottom line?

This is where proactive AI alerts are changing the game for agencies.

The Hidden Threats: Keyword Cannibalization and Index Bloat Explained

Before we dive into the solution, let’s quickly revisit two issues that often fly under the radar until it’s too late.

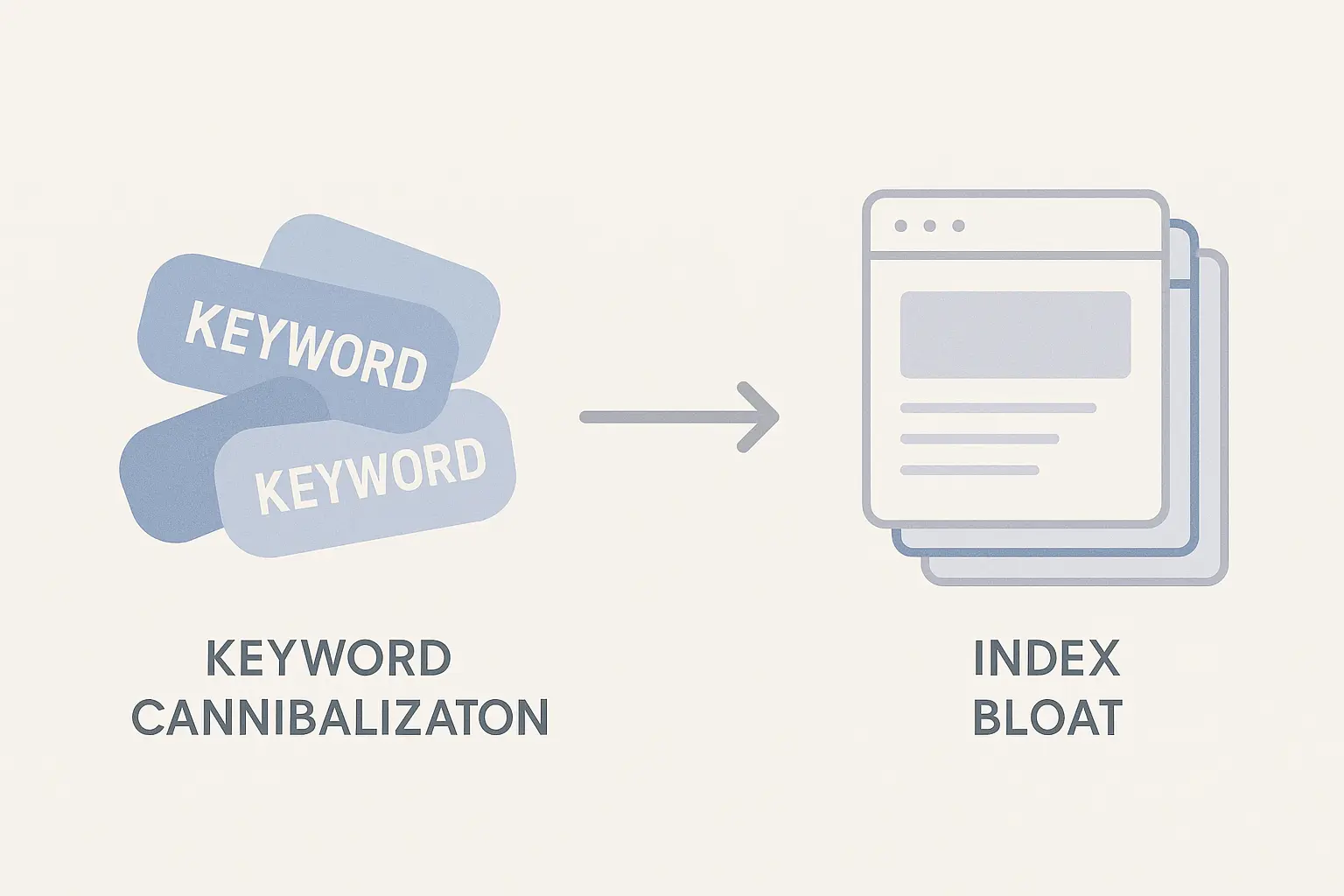

What is Keyword Cannibalization?

Imagine you have two salespeople from your company trying to sell the same product to the same customer at the same time. They end up confusing the customer and splitting the potential commission. In the end, neither of them makes a strong case, and a competitor might swoop in and win the deal.

That’s exactly what keyword cannibalization does. It happens when multiple pages on a single website compete for the same keyword or search intent. Google gets confused about which page is the most authoritative, so it often splits the ranking power between them.

The result? Instead of one page ranking strongly on page one, you might have two pages languishing on pages two and three.

This is more common than you’d think. Research from Semrush consistently shows that keyword cannibalization is a widespread issue that causes ranking volatility and diluted authority, even for major brands.

What is Index Bloat?

Now, imagine a massive library where, alongside valuable, well-researched books, there are thousands of duplicate pamphlets, author-less notes, and empty journals. When you ask the librarian for the best book on a specific topic, they have to sift through all that junk to find the real gems. Their job becomes harder, and they might even miss the best book entirely.

Index bloat forces search engines to do the same with a client’s website. It occurs when a site has a large number of low-value, thin, or duplicate pages indexed by Google. This problem can stem from several common sources:

- Auto-generated tag, category, or archive pages from a CMS

- Faceted navigation URLs from e-commerce filters (e.g., ?color=blue&size=medium)

- Unused media attachment pages

- Old, un-redirected staging or dev site pages

As Google’s own John Mueller has warned, having a significant number of low-quality pages in the index can negatively impact a site’s overall crawling and ranking performance. It wastes your client’s “crawl budget” and dilutes the authority of their most important pages.
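Many of the sources listed above can be recognized from the shape of the URL alone. The snippet below is a minimal sketch of that idea, not a definitive classifier: the path segments, hostname prefixes, and the `attachment_id` parameter are assumptions based on common CMS conventions, and `classify_url` is a hypothetical helper you would adapt to each client's site.

```python
from collections import Counter
from urllib.parse import urlparse, parse_qs

# Assumed conventions; adjust per client site.
ARCHIVE_SEGMENTS = {"tag", "category", "author", "archive"}
STAGING_PREFIXES = ("staging.", "dev.", "test.")


def classify_url(url):
    """Label a URL with the likely index-bloat source it matches, or 'ok'."""
    parts = urlparse(url)
    host = parts.netloc.lower()
    segments = [s for s in parts.path.lower().split("/") if s]
    query = parse_qs(parts.query)

    if host.startswith(STAGING_PREFIXES):
        return "staging"
    if "attachment_id" in query or "attachment" in segments:
        return "media_attachment"
    if query:
        # Any remaining query string is treated as a filter/parameter URL.
        return "faceted_or_parameter"
    if ARCHIVE_SEGMENTS & set(segments):
        return "cms_archive"
    return "ok"


def summarize(urls):
    """Count how many indexed URLs fall into each category."""
    return Counter(classify_url(u) for u in urls)
```

Running `summarize` over a list of indexed URLs gives a quick breakdown of where the low-value pages are coming from, which is usually enough to decide where to look first.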

Why Manual Checks Are a Losing Battle for Agencies

For a single website, you might be able to spot these issues with a periodic manual audit. But when you’re responsible for 5, 10, or 50+ clients? It’s impossible.

The reality is, these problems are often introduced unintentionally. A client’s content team publishes a new post. A developer pushes a site update. A new plugin is installed. Each action carries a risk of creating a new cannibalization or bloat issue.

Checking every client site, every week, for every possible issue simply isn’t a scalable strategy. You need a top-down view that flags anomalies across your entire portfolio, allowing you to focus your expertise where it’s needed most.

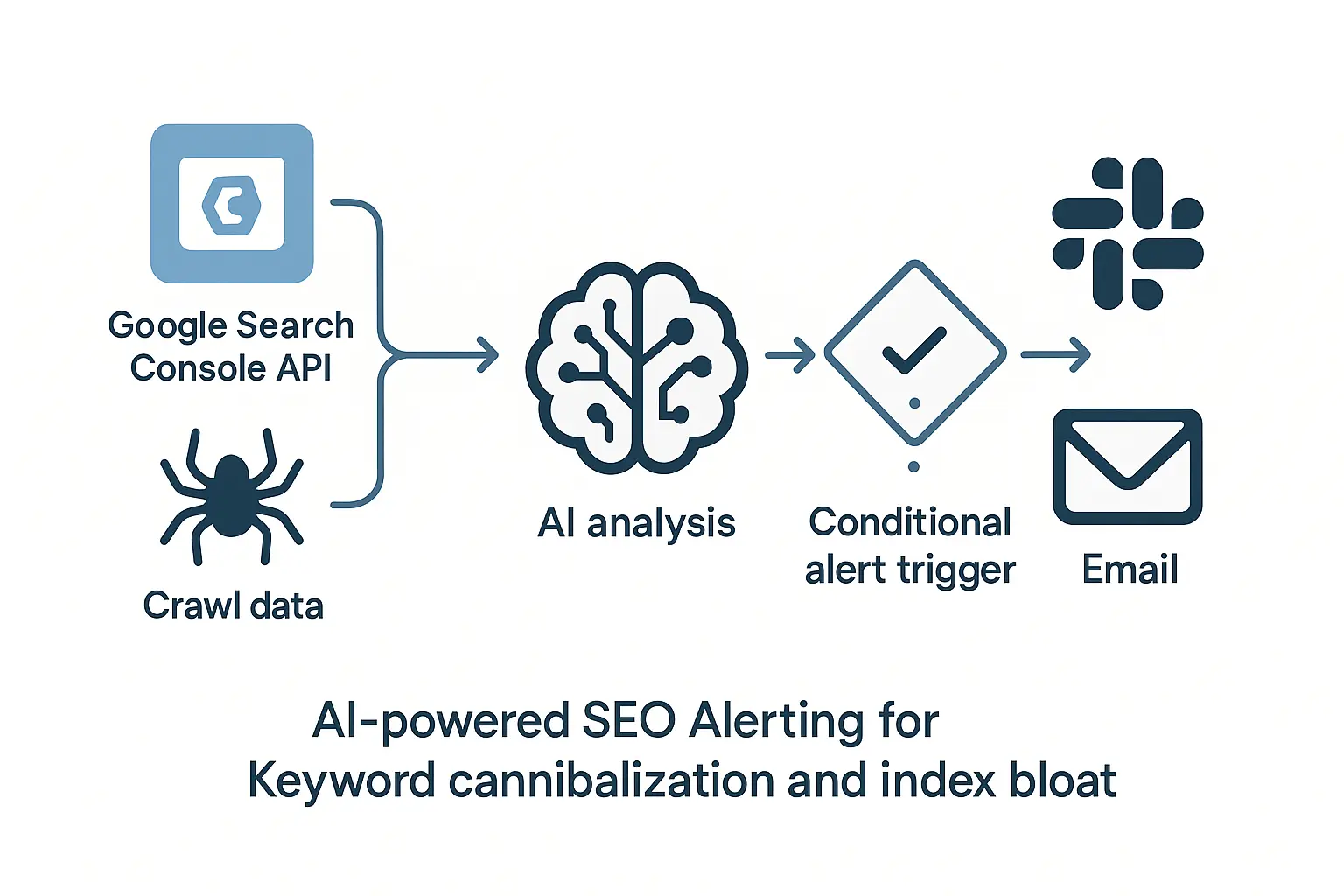

From Reactive Fixes to Proactive AI Monitoring

The solution is to shift from detecting drops to preventing them. Instead of using tools that tell you a ranking has already fallen, you need AI-powered automation to alert you to the underlying conditions that cause rankings to fall.

This isn’t about replacing human strategy; it’s about empowering it. By automating detection, you free up your team to focus on solving problems and driving growth.

Setting Up AI Alerts for Keyword Cannibalization

A smart alert system does more than just check if two URLs rank for the same term. It understands intent and content similarity. Here’s the kind of rule you can set up:

- The Trigger: Alert me when two or more pages on client-site.com have a semantic content similarity score above 85% AND are both optimized for the same primary keyword cluster.

- Why It Works: This alert ignores healthy instances, like a blog post targeting an informational keyword and a service page targeting a transactional one. It specifically homes in on unintentional, direct competition, flagging it for review the moment the second page is indexed.
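To make the trigger above concrete, here is a rough sketch of the rule in Python. It assumes each page comes as a dictionary with hypothetical `url`, `cluster`, and `text` fields, and it uses a simple word-count cosine similarity as a stand-in for a real semantic similarity model, so treat the scores as illustrative only.

```python
import math
import re
from collections import Counter
from itertools import combinations

SIMILARITY_THRESHOLD = 0.85  # the 85% figure from the trigger above


def term_vector(text):
    """Lowercased word counts; a crude stand-in for a semantic embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def cannibalization_alerts(pages, threshold=SIMILARITY_THRESHOLD):
    """Return (url_a, url_b, score) for every pair of pages that shares a
    keyword cluster AND scores above the similarity threshold."""
    alerts = []
    for a, b in combinations(pages, 2):
        if a["cluster"] != b["cluster"]:
            continue  # different intent or cluster: not direct competition
        score = cosine_similarity(term_vector(a["text"]), term_vector(b["text"]))
        if score > threshold:
            alerts.append((a["url"], b["url"], round(score, 2)))
    return alerts
```

The cluster check comes first on purpose: two pages can read alike and still be healthy if they target different intents, so only pairs inside the same cluster are ever scored.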

Setting Up AI Alerts for Index Bloat

For index bloat, the AI monitors the rate and type of URL indexation. It establishes a baseline of what’s “normal” for a client’s site and flags deviations.

- The Trigger: Alert me when the total number of indexed URLs for client-site.com increases by more than 5% week-over-week without a corresponding increase in sitemap-submitted URLs.

- Why It Works: This simple rule can instantly catch issues like a misconfigured plugin creating thousands of parameter-based URLs or a noindex tag being accidentally removed from an archive section. It signals that something is being indexed that shouldn’t be, prompting a quick investigation.
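The arithmetic behind this trigger is simple enough to sketch. The function below is one possible reading of the rule, under the assumption that "a corresponding increase" means newly submitted sitemap URLs are subtracted from the growth in indexed URLs before the 5% test is applied. The function name and parameters are hypothetical, and the counts would come from whatever index and sitemap reporting you already collect.

```python
def index_bloat_alert(prev_indexed, curr_indexed, prev_sitemap, curr_sitemap,
                      threshold=0.05):
    """Return True when indexed URLs grew by more than `threshold`
    week-over-week after discounting growth explained by new sitemap URLs."""
    if prev_indexed <= 0:
        return False  # no baseline yet, so nothing to compare against

    indexed_growth = curr_indexed - prev_indexed
    # Only count sitemap growth; a shrinking sitemap explains nothing.
    sitemap_growth = max(0, curr_sitemap - prev_sitemap)

    unexplained = indexed_growth - sitemap_growth
    return unexplained / prev_indexed > threshold
```

For example, a site that goes from 1,000 to 1,200 indexed URLs while its sitemap grows by only 10 has 190 unexplained URLs (19% of the baseline), so the alert fires. If the sitemap had grown by 200 over the same week, it would not.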

Automating this kind of oversight is a cornerstone of effective white-label SEO services, as it allows an agency partner to safeguard client performance at scale without bogging down your internal team with endless manual checks.

The Agency Advantage: Scalability, Efficiency, and Client Retention

Implementing a proactive alert system isn’t just about better SEO; it’s about building a better agency.

- True Scalability: You can confidently onboard new clients, knowing your automated systems are monitoring their technical health from day one.

- Increased Efficiency: Your team’s valuable time is spent on high-impact strategy and client communication, not on tedious, manual audits.

- Enhanced Client Retention: You shift your role from reactive service provider to proactive strategic partner. Reporting on “disasters averted” is an incredibly powerful way to demonstrate value far beyond a simple rankings report.

Frequently Asked Questions About Proactive SEO Monitoring

Q: Isn’t some keyword cannibalization okay?

A: Absolutely. It’s common to have a blog post for an informational intent (“what is a mortgage”) and a service page for a transactional intent (“get a mortgage quote”) that touch on the same topic. The problem is unintentional cannibalization, where two pages target the exact same intent, confusing Google and splitting your authority. Smart AI alerts can differentiate between the two.

Q: How do I fix index bloat once it’s detected?

A: Once the alert points you to the problem URLs, the fix usually involves a combination of tools: adding a noindex tag to low-value pages, using 301 redirects to consolidate thin pages into a more authoritative one, or updating your robots.txt file to keep crawlers out of sections that were never meant to be crawled. One caveat: Google has to crawl a page to see its noindex tag, so don’t block a URL in robots.txt until it has actually dropped out of the index. The alert is the critical first step: making you aware the problem exists.
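As a rough sketch, assuming you have the site's robots.txt content and a list of URLs you've tagged noindex, the check below flags URLs where the two fixes collide. A crawler that is blocked by robots.txt never fetches the page, so it never sees the noindex tag. `noindex_conflicts` is a hypothetical helper built on Python's standard `urllib.robotparser` module.

```python
from urllib.robotparser import RobotFileParser


def noindex_conflicts(robots_txt, noindex_urls, user_agent="Googlebot"):
    """Return the noindex-tagged URLs that robots.txt blocks for `user_agent`.

    These are the URLs whose noindex tag cannot take effect, because the
    crawler is not allowed to fetch the page and read the tag.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in noindex_urls if not parser.can_fetch(user_agent, url)]
```

Any URL this returns needs its robots.txt rule lifted, at least temporarily, before the noindex tag can do its job.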

Q: Can’t I just use Google Search Console for this?

A: Google Search Console is an essential tool, but it’s largely reactive. It will show you a coverage issue or a performance drop after it has already happened. The goal of AI alerts is to be predictive, flagging the root cause of a potential problem before it shows up in a GSC report.

Q: Does setting this up require a developer or complex software?

A: It used to, but not anymore. Modern SEO outsourcing platforms built for agencies are designed to be user-friendly. They provide the infrastructure to set up these kinds of sophisticated, portfolio-wide monitoring rules without needing to write a single line of code.

Start Building a More Resilient SEO Offering

The future of successful agency SEO isn’t just about winning rankings; it’s about protecting them. By moving from a reactive to a proactive model, you create a more stable, scalable, and valuable service for your clients. You stop being a fire-fighter and become the architect of a fire-proof house.

This shift doesn’t require more hours or a bigger team. It requires a smarter approach—one that leverages AI and automation to handle the tedious work of monitoring, freeing you to do what you do best: grow your clients’ businesses and your own.